Connection Handling & Timeouts Explained

Why Applications Fail Even When Servers Are Up

1. Problem Statement

You open an application.

Everything looks fine:

Servers are UP

Health checks are passing

But users still see:

502 Bad Gateway

504 Gateway Timeout

This creates confusion: 👉 “If servers are UP, why are users failing?”

The answer lies in connection handling and timeouts.

2. Concept Explanation

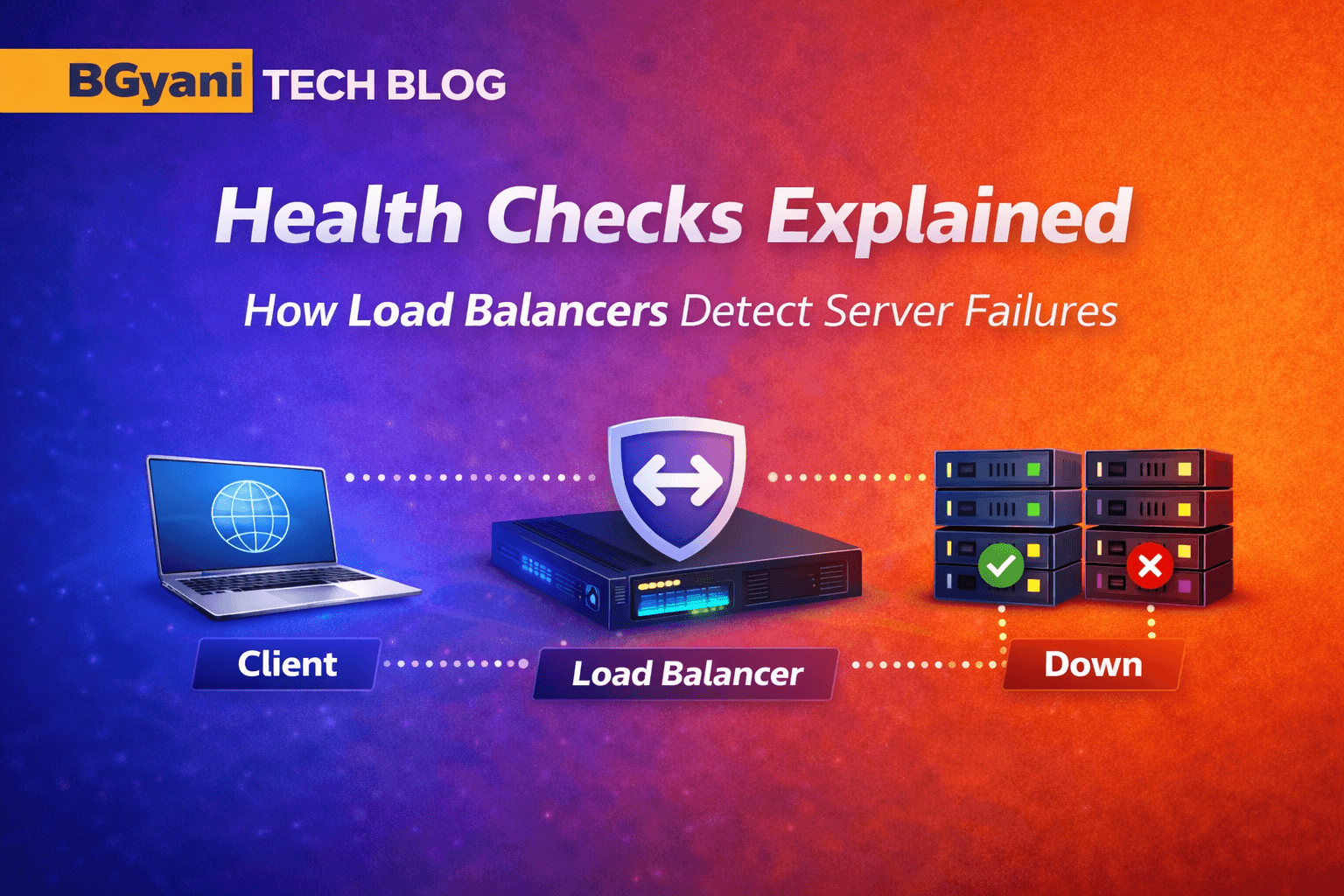

What is a Connection?

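A connection is a communication path between a client and a server. With a load balancer in the middle, a request usually travels over two connections:

Client (browser/app) → Load Balancer

Load Balancer → Backend Server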

Think of it like a phone call:

You dial → connection established

You wait → response expected

Nobody answers for too long → you hang up (timeout)

What is a Timeout?

A timeout is the maximum time a system waits for a response.

If no response arrives within that time, the system stops waiting, usually closes the connection, and reports an error.
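A minimal Python sketch of a client-side timeout. It assumes a local test server that accepts the connection but never replies; the `silent_server` and `wait_for_reply` helpers and the 1-second value are illustrative only. Without a timeout, the `recv` call would block forever. With one, the wait is bounded.

```python
import socket
import threading
import time


def silent_server(listener: socket.socket) -> None:
    """Accept one connection and never reply (simulates a hung backend)."""
    conn, _ = listener.accept()
    time.sleep(3)  # hold the connection open without sending anything
    conn.close()


def wait_for_reply(port: int, timeout_s: float) -> str:
    """Connect, send a request, and wait at most timeout_s seconds for a reply."""
    with socket.create_connection(("127.0.0.1", port), timeout=timeout_s) as sock:
        sock.sendall(b"ping")
        try:
            data = sock.recv(1024)  # raises socket.timeout after timeout_s
            return f"reply: {data!r}"
        except socket.timeout:
            return "timed out"


listener = socket.socket()
listener.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
listener.listen(1)
port = listener.getsockname()[1]
threading.Thread(target=silent_server, args=(listener,), daemon=True).start()

start = time.monotonic()
result = wait_for_reply(port, timeout_s=1.0)
elapsed = time.monotonic() - start
listener.close()
print(result, f"after {elapsed:.1f}s")
```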

Why Do Timeouts Exist?

Without timeouts:

Requests could wait forever

Resources (connections, memory) would be exhausted

The entire system would slow down

Timeouts protect:

Performance

Stability

User experience

3. Types / Variations

Client Timeout

- How long the client (browser/app) waits for a response

Load Balancer Timeout

- How long the load balancer waits for the backend to respond

Server Timeout

- How long the server allows for its own processing (for example, database calls)

Idle Timeout

- How long a connection can stay open with no activity before it is closed

Response Timeout

- Maximum time allowed to receive the complete response
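An idle timeout and a response timeout are easy to confuse. The Python sketch below separates them. It assumes the arrival times of the response chunks are known in advance, and the `first_timeout` function and sample timings are hypothetical. The idle timer restarts every time data arrives. The response timer is a single deadline counted from the start of the request.

```python
from typing import Optional, Sequence


def first_timeout(chunk_times: Sequence[float], done_at: float,
                  idle_timeout: float, response_timeout: float) -> Optional[str]:
    """Return which timeout fires first, or None if the response completes in time.

    chunk_times: seconds after the request at which pieces of the response arrive.
    done_at: second at which the last byte of the response arrives.
    """
    # Idle timeout: the timer restarts whenever data arrives.
    idle_fires_at = None
    last_activity = 0.0  # sending the request counts as activity at t=0
    for t in [*chunk_times, done_at]:
        if t - last_activity > idle_timeout:
            idle_fires_at = last_activity + idle_timeout
            break
        last_activity = t

    # Response timeout: one fixed deadline measured from the start of the request.
    response_fires_at = response_timeout if done_at > response_timeout else None

    fired = [(when, name) for when, name in
             [(idle_fires_at, "idle timeout"), (response_fires_at, "response timeout")]
             if when is not None]
    return min(fired)[1] if fired else None


# Data trickles in every 2s: never idle for 3s, but the 5s total budget runs out
print(first_timeout([2, 4, 6, 8], done_at=10, idle_timeout=3, response_timeout=5))  # → response timeout
# One chunk at 1s, then a 5s stall: the 3s idle timer fires at t=4
print(first_timeout([1], done_at=6, idle_timeout=3, response_timeout=10))  # → idle timeout
```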

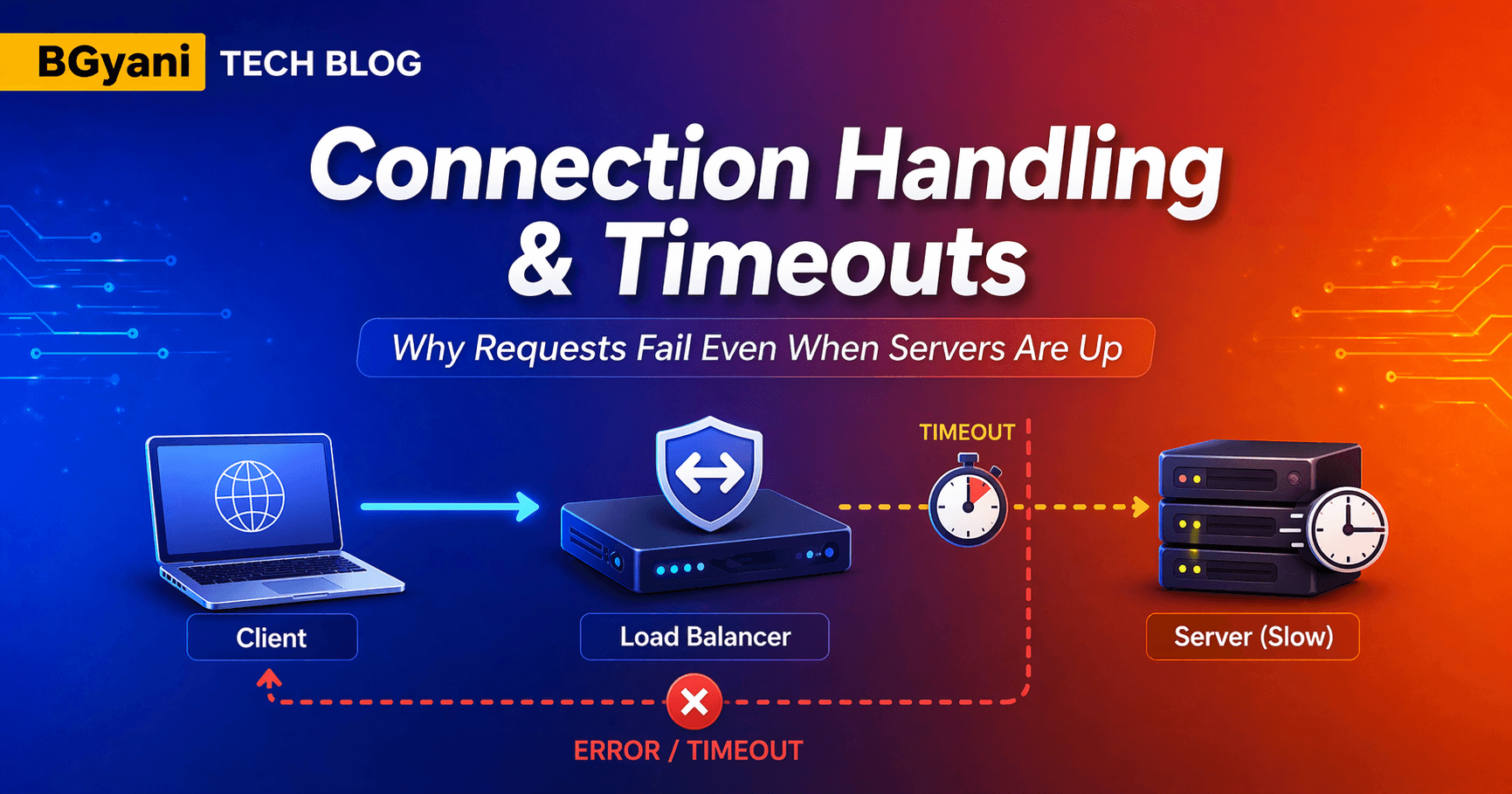

4. How It Works Internally

Step-by-step flow:

Client sends request

Load Balancer receives request

LB forwards request to backend server

Server processes request

Scenario 1 — Success

Server responds within timeout

LB forwards response to client

👉 Result: Successful request

Scenario 2 — Failure

Server is slow

Response exceeds timeout

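👉 LB stops waiting and drops the connection

👉 Client receives an error (typically 504 Gateway Timeout; 502 Bad Gateway when the backend connection fails or returns an invalid response)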
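Both scenarios can be sketched with Python's `asyncio`. The sketch assumes a toy load balancer that waits for a simulated backend with `asyncio.wait_for` and turns a timeout into a 504. The function names are hypothetical, and the delays are scaled down to fractions of a second.

```python
import asyncio


async def backend(delay_s: float) -> str:
    """Simulated backend that needs delay_s seconds to produce a response."""
    await asyncio.sleep(delay_s)
    return "200 OK"


async def load_balancer(backend_delay_s: float, lb_timeout_s: float) -> str:
    """Forward the request and give up if the backend exceeds the LB timeout."""
    try:
        return await asyncio.wait_for(backend(backend_delay_s), timeout=lb_timeout_s)
    except asyncio.TimeoutError:
        # The LB stops waiting and answers the client with an error itself
        return "504 Gateway Timeout"


fast = asyncio.run(load_balancer(backend_delay_s=0.05, lb_timeout_s=0.2))  # Scenario 1
slow = asyncio.run(load_balancer(backend_delay_s=0.5, lb_timeout_s=0.2))   # Scenario 2
print(fast)
print(slow)
```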

Optional: Retry Behavior

Some systems retry failed requests automatically:

This can help with transient failures

But excessive retries add more load to a backend that is already slow
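A common way to keep retries from adding load is to cap the number of attempts and wait longer between each one (exponential backoff with jitter). The minimal Python sketch below assumes the wrapped call signals a transient failure by raising `TimeoutError`. `call_with_retries` and its defaults are hypothetical.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retries(call: Callable[[], T], max_attempts: int = 3,
                      base_delay_s: float = 0.1) -> T:
    """Call `call`, retrying on TimeoutError with capped attempts and exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return call()
        except TimeoutError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure instead of retrying forever
            # Delay doubles on each attempt (base, 2*base, 4*base, ...), plus random
            # jitter so that many clients do not retry in lockstep
            delay = base_delay_s * 2 ** (attempt - 1)
            time.sleep(delay + random.uniform(0, delay))
    raise AssertionError("unreachable")


# Usage: a hypothetical backend call that times out twice, then succeeds
attempts = {"count": 0}


def flaky_backend() -> str:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TimeoutError("backend too slow")
    return "ok"


result = call_with_retries(flaky_backend, base_delay_s=0.01)
print(result, "after", attempts["count"], "attempts")
```

Retrying is safest for requests that can be repeated without side effects. A request that changes state, such as a payment, may already have been processed when it timed out.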

5. Diagram

![Diagram: load balancer connection timeout](loadbalancer-Connectiontimeout-diagram.png)

6. Real-World Example

Imagine a payment system:

User clicks “Pay”

API calls backend service

Backend waits for database response

Now:

DB is slow (heavy load)

Response takes 12 seconds

LB timeout is 10 seconds

👉 Result:

LB closes connection

User sees 504 error

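Payment may or may not be processed: the backend can keep working after the LB gives up, so the charge may go through even though the user saw an error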
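Why "may or may not"? The load balancer only stops waiting. It does not necessarily stop the backend. The Python `asyncio` sketch below models the timeline with times scaled down: 0.12s stands in for the 12-second DB response and 0.10s for the 10-second LB timeout. It uses `asyncio.shield` to model a backend that keeps working after the LB gives up. That behavior is an assumption about this hypothetical backend, not a statement about any particular product.

```python
import asyncio

processed_payments = []  # order IDs the backend actually charged


async def process_payment(order_id: str, db_delay_s: float) -> str:
    """Backend: waits on a slow database, then records the payment."""
    await asyncio.sleep(db_delay_s)  # slow DB under heavy load
    processed_payments.append(order_id)
    return "200 Payment accepted"


async def pay(order_id: str, db_delay_s: float, lb_timeout_s: float) -> str:
    """LB in front of the backend: returns what the user sees."""
    backend_task = asyncio.create_task(process_payment(order_id, db_delay_s))
    try:
        # shield(): cancelling the LB's wait does not cancel the backend work
        return await asyncio.wait_for(asyncio.shield(backend_task), timeout=lb_timeout_s)
    except asyncio.TimeoutError:
        user_sees = "504 Gateway Timeout"
    # Only so this simulation can inspect the outcome: let the backend finish
    await backend_task
    return user_sees


# 12 s DB response vs 10 s LB timeout, scaled down to 0.12 s and 0.10 s
status = asyncio.run(pay("order-42", db_delay_s=0.12, lb_timeout_s=0.10))
print(status, processed_payments)
```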

7. Common Issues / Pitfalls

Timeout Mismatch

Client: 30s

LB: 10s

Server: 60s

👉 The LB cuts the request off after 10s, even though the client would wait 30s and the server is allowed 60s
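One quick way to spot this mismatch is to list the timeouts from the client inwards and check which layer gives up first. The Python sketch below assumes one timeout per layer. It also assumes the rule of thumb that each outer layer should wait at least as long as the layer behind it, so that the innermost layer times out first and can return a meaningful error. The function and layer names are illustrative.

```python
from typing import List, Tuple


def check_timeout_chain(chain: List[Tuple[str, float]]) -> Tuple[str, List[str]]:
    """chain: (layer, timeout in seconds), ordered from the client inwards.

    Returns the layer that gives up first, plus a warning for every outer layer
    whose timeout is shorter than that of the layer directly behind it.
    """
    first_to_give_up = min(chain, key=lambda item: item[1])[0]
    issues = []
    for (outer, outer_s), (inner, inner_s) in zip(chain, chain[1:]):
        if outer_s < inner_s:
            issues.append(f"{outer} ({outer_s:g}s) gives up before {inner} ({inner_s:g}s)")
    return first_to_give_up, issues


# The mismatch from the example above: Client 30s, LB 10s, Server 60s
layer, issues = check_timeout_chain([("Client", 30), ("LB", 10), ("Server", 60)])
print("First to give up:", layer)
for issue in issues:
    print("WARNING:", issue)
```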

Too Aggressive Timeout

- Requests that are only slow (for example, during peak load) fail even though they would have succeeded

No Retry Strategy

- A single transient failure goes straight to the user

Misleading “Server is UP”

Health check passes (it may only confirm that the server answers)

But the application is slow

👉 UP ≠ Healthy
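One way to make a health check reflect responsiveness as well as reachability is to time the probe and treat a slow answer as degraded. The minimal Python sketch below assumes the probe is any callable that returns True on success. The `classify_health` name, the probe functions, and the 0.2-second threshold are hypothetical.

```python
import time
from typing import Callable


def classify_health(probe: Callable[[], bool], slow_threshold_s: float) -> str:
    """Run a probe, time it, and classify the backend."""
    start = time.monotonic()
    try:
        ok = probe()
    except Exception:
        ok = False  # an exception (e.g. connection refused) counts as a failed probe
    elapsed = time.monotonic() - start
    if not ok:
        return "DOWN"
    if elapsed > slow_threshold_s:
        return "UP but slow"  # passes a plain UP/DOWN check, still hurts users
    return "HEALTHY"


def fast_probe() -> bool:
    return True


def slow_probe() -> bool:
    time.sleep(0.3)  # simulate a backend that answers, but slowly
    return True


def failing_probe() -> bool:
    raise ConnectionRefusedError("port closed")


for probe in (fast_probe, slow_probe, failing_probe):
    print(probe.__name__, "→", classify_health(probe, slow_threshold_s=0.2))
```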

8. Try It Yourself


👉 ConnectionTimeout Visualizer

9. Key Takeaways

Timeouts prevent systems from hanging

A server being UP does NOT mean it responds quickly

Timeout mismatch is a common production issue

Aligning timeouts across client, load balancer, and server improves reliability

10. Conclusion

Connection handling is not just about routing traffic.

It is also about how long you are willing to wait.

Even a healthy system can fail if it responds too late.

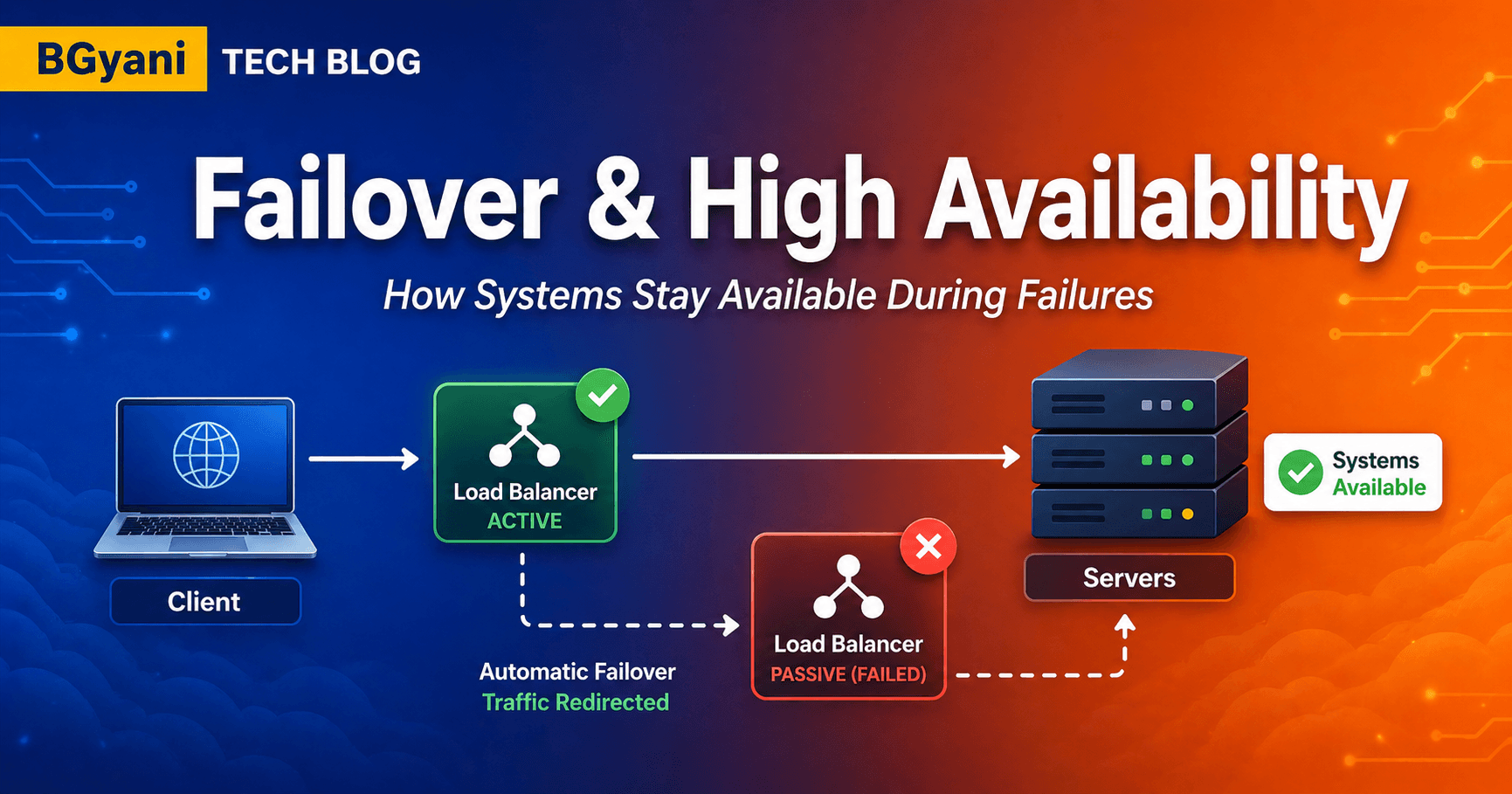

11. Series Continuity

Previous: Failover & High Availability

Next: SSL Offload Explained

12. Final Thought

In production:

👉 “Users don’t care if your server is UP. They care if it responds on time.”

13. Practical: NetScaler Hands-on

13.1 Mini Lab

Create LB vServer

Add backend service

Simulate delay (slow response)

Observe timeout
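For the "Simulate delay" step, any backend that answers slowly will do. Below is a minimal sketch built on Python's standard-library `http.server`. It assumes you can run a small script on the host that the NetScaler service points to. The script name `slow_backend.py`, the port, and the default delay are hypothetical. Set the delay above or below the timeout you are testing.

```python
import sys
import time
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class SlowHandler(BaseHTTPRequestHandler):
    delay_s = 15.0  # hypothetical default: longer than the timeout under test

    def do_GET(self) -> None:
        time.sleep(self.delay_s)  # simulate slow processing (e.g. a busy database)
        body = b"slow response\n"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port: int, delay_s: float) -> None:
    """Serve on all interfaces, answering every GET after delay_s seconds."""
    SlowHandler.delay_s = delay_s
    server = ThreadingHTTPServer(("0.0.0.0", port), SlowHandler)
    print(f"Slow backend on port {port}, delay {delay_s}s")
    server.serve_forever()


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python slow_backend.py <port> [delay_seconds]
    serve(int(sys.argv[1]), float(sys.argv[2]) if len(sys.argv) > 2 else SlowHandler.delay_s)
```

A delay longer than the configured timeout should reproduce the error through the LB. Rerun with a shorter delay to see the same request succeed.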

13.2 Variation / Experiment

Change timeout value

Observe:

Errors

Response success rate
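You can reason about this experiment before running it. The Python sketch below sweeps several timeout values against a set of response times and reports the success rate. The latency samples are made up for illustration, not measured. Replace them with response times from your own service.

```python
from typing import Sequence


def success_rate(latencies_s: Sequence[float], timeout_s: float) -> float:
    """Fraction of requests that finish within the timeout."""
    ok = sum(1 for latency in latencies_s if latency <= timeout_s)
    return ok / len(latencies_s)


# Hypothetical response times (seconds), including a few slow peak-load requests
sample_latencies = [0.4, 0.8, 1.2, 2.5, 3.0, 4.8, 6.5, 9.0, 11.0, 14.0]

for timeout in (2, 5, 10, 15):
    rate = success_rate(sample_latencies, timeout)
    print(f"timeout={timeout:>2}s  success={rate:.0%}  errors={1 - rate:.0%}")
```

In this model, a longer timeout always raises the success rate. In a real system, every extra second also means a connection held open on the LB and the backend. That is why both overly aggressive and overly generous timeouts cause trouble.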

13.3 Commands

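```
show lb vserver <vserver_name>
# Check the LB vserver state and its configured timeouts
# Useful to confirm whether the LB timeout is too low

show service <service_name>
# Check the backend service state and statistics
# Helps identify a server that is slow but still UP

set lb vserver <vserver_name> -cltTimeout <seconds>
# Client idle timeout: the client connection is closed after <seconds> with no activity
# Note: -timeout on an LB vserver is the persistence timeout (in minutes), not a response timeout

set service <service_name> -svrTimeout <seconds>
# Server idle timeout: if the backend sends nothing for <seconds>, the connection is closed

set ns timeout -httpClient <seconds> -httpServer <seconds>
# Global idle timeouts (affect connections platform-wide; check "show ns timeout" for all options)
# Important when multiple apps share the same ADC

set service <service_name> -maxClient <number>
# Limits the number of simultaneous open connections to the server
# Prevents overload → fewer slow responses → fewer timeouts
# (-maxReq limits requests per persistent connection, not concurrency)
```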

Example:

```
# View the LB vserver configuration and timeout settings
show lb vserver <vserver_name>

# Check the backend service state and response behavior
show service <service_name>

# Close idle client connections after 10 seconds
set lb vserver <vserver_name> -cltTimeout 10

# Close idle backend (server) connections after 15 seconds
set service <service_name> -svrTimeout 15

# Save the configuration
save config
```